ViolinBrush: Real-Time Music-to-Painting Robot

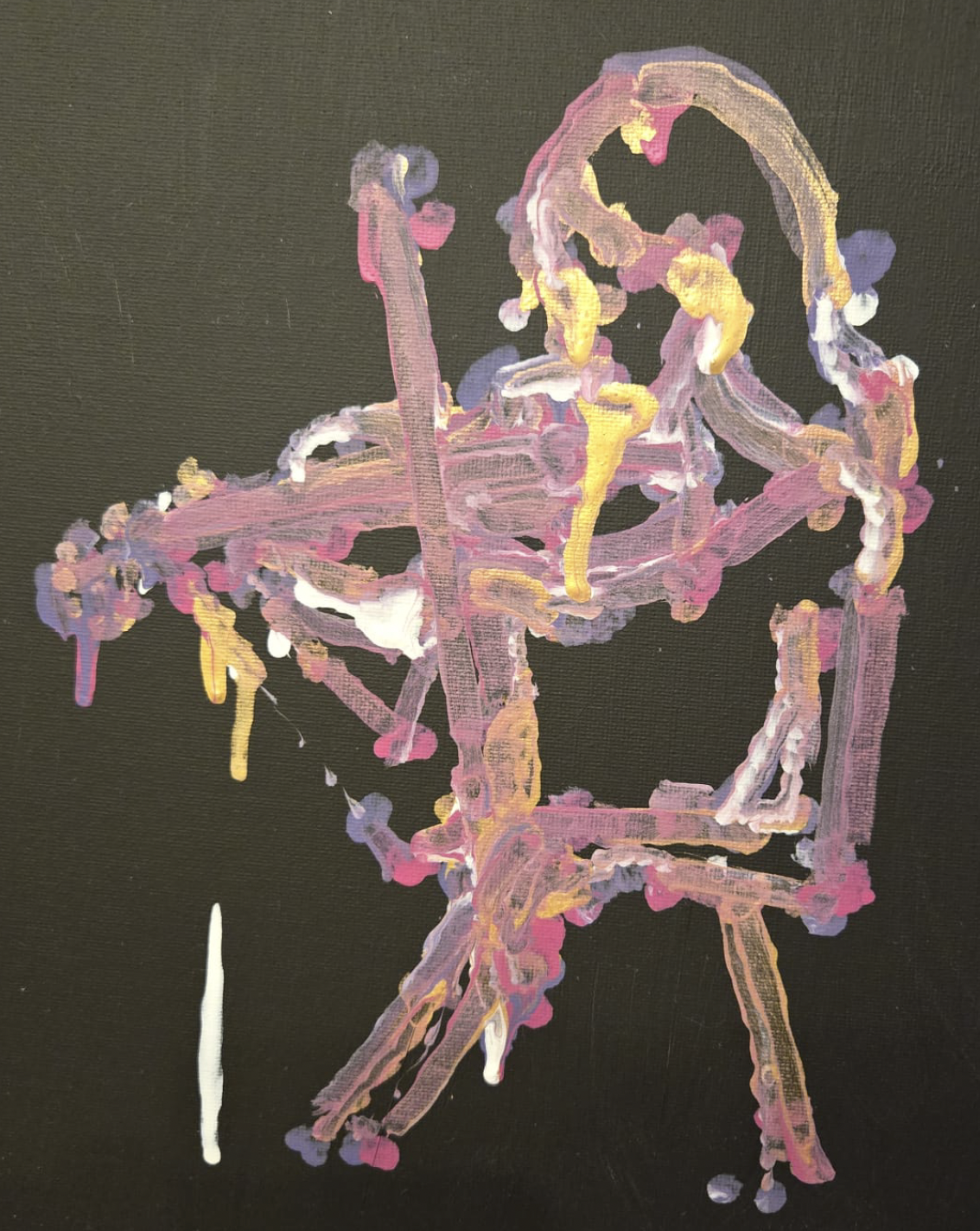

An embodied AI system that translates live musical performance into robotic paintings in real time, converting pitch, onset, and amplitude into physical brush gestures.

Published at ACM Human-AI Interaction (HAI) Conference. Demonstrated real-time multimodal translation between sound and physical generative output.

ViolinBrush is an embodied AI system that translates live musical features into robotic painting gestures in real time, demonstrating multimodal translation between sound perception and physical generative output.

Technical Architecture

- Max/MSP for real-time audio analysis and feature extraction

- Python for gesture planning and robot control

- OSC (Open Sound Control) for low-latency communication between audio and control systems

- Real-time extraction of musical features: pitch, onset detection, and amplitude envelope
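
To make the OSC link between the audio and control sides concrete, here is a minimal sketch of how one feature frame could be packed and unpacked as an OSC 1.0 message using only the Python standard library. The address `/violin/features` and the argument order (pitch in Hz, onset flag, amplitude) are assumptions chosen for illustration, not the project's actual message layout, and the sketch handles only float32 arguments.

```python
import struct


def _pad(raw: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC 1.0 strings require."""
    raw += b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def encode_osc(address: str, *floats: float) -> bytes:
    """Build an OSC message whose arguments are all big-endian float32 values."""
    type_tags = "," + "f" * len(floats)
    payload = struct.pack(f">{len(floats)}f", *floats)
    return _pad(address.encode("ascii")) + _pad(type_tags.encode("ascii")) + payload


def _read_string(data: bytes, offset: int):
    """Read one padded OSC string; return (text, offset of the next field)."""
    end = data.index(b"\x00", offset)
    text = data[offset:end].decode("ascii")
    # Skip the terminator and padding up to the next 4-byte boundary.
    return text, (end + 4) & ~3


def decode_osc(data: bytes):
    """Parse a float-only OSC message into (address, [values])."""
    address, offset = _read_string(data, 0)
    type_tags, offset = _read_string(data, offset)
    if not type_tags.startswith(",") or set(type_tags[1:]) - {"f"}:
        raise ValueError("this sketch supports float32 arguments only")
    count = len(type_tags) - 1
    return address, list(struct.unpack_from(f">{count}f", data, offset))


# Hypothetical feature frame: pitch (Hz), onset flag (1.0 = onset), amplitude (0..1).
packet = encode_osc("/violin/features", 440.0, 1.0, 0.5)
address, (pitch_hz, onset, amplitude) = decode_osc(packet)
```

In practice such packets are usually carried over UDP, which keeps latency low at the cost of delivery guarantees; an established OSC library would likely replace this hand-rolled codec in a real pipeline.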

Approach

The system maps musical features to painting parameters: pitch influences color selection, onset timing drives brush stroke initiation, and amplitude controls stroke pressure and scale. The robot executes these gestures on a physical canvas, creating a visual record of the musical performance.
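
The mapping described above can be sketched as a small pure function. This is an illustrative sketch rather than the system's actual planner: the `Stroke` type, the 0..1 normalization, the logarithmic pitch-to-hue curve, and the pitch bounds are all assumptions introduced here.

```python
import math
from dataclasses import dataclass

# Assumed pitch range: G3 (the violin's lowest open string) up to G7.
PITCH_MIN_HZ = 196.0
PITCH_MAX_HZ = 3136.0


@dataclass
class Stroke:
    """Hypothetical stroke parameters, each normalized to 0..1."""
    hue: float       # color selection, driven by pitch
    pressure: float  # brush pressure, driven by amplitude
    scale: float     # stroke size, driven by amplitude


def clamp01(value: float) -> float:
    return max(0.0, min(1.0, value))


def pitch_to_hue(pitch_hz: float) -> float:
    """Map pitch to hue on a log scale so each octave spans an equal hue range."""
    span_octaves = math.log2(PITCH_MAX_HZ / PITCH_MIN_HZ)
    return clamp01(math.log2(pitch_hz / PITCH_MIN_HZ) / span_octaves)


def plan_stroke(pitch_hz: float, onset: bool, amplitude: float):
    """Return a Stroke when an onset occurs; otherwise return None."""
    if not onset or pitch_hz <= 0:
        return None  # no new stroke without a detected onset and a valid pitch
    level = clamp01(amplitude)
    return Stroke(hue=pitch_to_hue(pitch_hz), pressure=level, scale=level)


# Example: an A4 note (440 Hz) with a detected onset at moderate loudness.
stroke = plan_stroke(440.0, onset=True, amplitude=0.6)
```

Keeping the mapping free of robot or audio I/O makes it easy to tune the curves offline before a stroke is handed to the motion controller.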

Research Contribution

The work was published at the ACM Human-AI Interaction (HAI) Conference and contributes to the emerging field of embodied creative AI: systems that bridge auditory perception and physical creative action.